If you’re new to mobile marketing, A/B testing can be a little intimidating. There’s a lot of information out there: experts can study articles on who to target and what to A/B test, but beginners might have a hard time getting started. How do I run my first A/B test? And how can I make sure it provides me with valuable data?

If you’re worried about running your first A/B test, this guide will show you the way. In reality, the A/B testing process is quite simple: develop a hypothesis, choose your audience, run the test to collect data, and analyze the results. Let’s break those steps down to see just how stress-free A/B testing can be.

A/B Testing Step One: Develop a Hypothesis

Every good science experiment starts with a hypothesis. Without a hypothesis, it can be difficult to distil raw data into actionable insights.

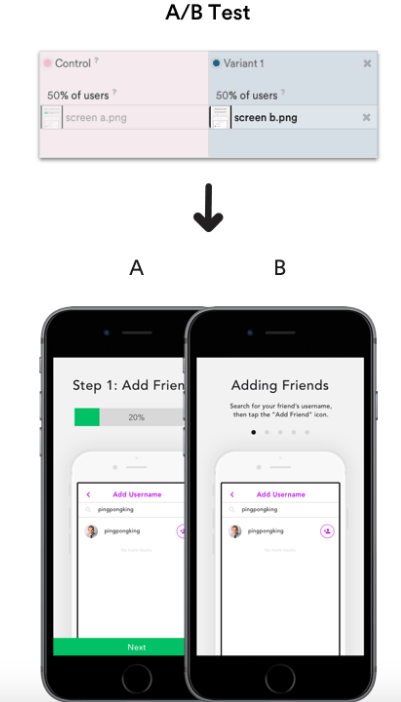

For example, a mobile app publisher might want to test the effectiveness of a new onboarding flow versus an old one. Perhaps the new flow is designed to be more visual and skimmable. In this scenario, the hypothesis might be that people will find it easier to parse the clean, visually appealing flow, resulting in more onboarding completions and a higher number of Daily Active Users (DAU).

Depending on your goals, the hypothesis can be even more specific. Maybe the new onboarding flow will be more successful with one audience over another.

A/B Testing Step Two: Choose Your Audience

You don’t have to target your entire user base with every A/B test. In fact, you might see better results if you only test changes with specific segments of your users, assuming that your hypothesis takes this into account.

Keeping with the above example, perhaps you observe that one segment of your audience has disproportionately low onboarding completions. You posit that a less text-heavy flow might help, so you rework the design. The hypothesis is that the new visuals will lead to a higher onboarding completion rate within that segment.

In this example, the audience was decided from the get-go. It was a problem with an audience that prompted the redesign in the first place. In other cases, you might start with product tweaks, and then A/B test those tweaks on different audiences to evaluate their effectiveness.

Either way, the hypothesis and the audience are entwined. Once you’ve decided on the problem you’re trying to solve, you’re ready to determine which user segment will help you solve it.

A/B Testing Step Three: Run the Test

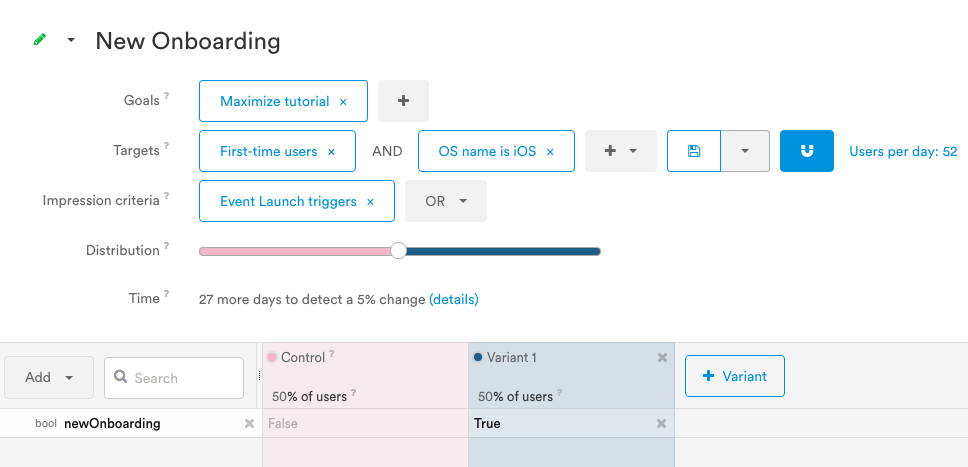

The process of setting up the A/B test will vary depending on your platform of choice. With Leanplum’s A/B testing solution, you can visually adjust all of the parameters from the test creation screen. Here’s an example of the dashboard:

In this example, our goal is to maximize completions of the “tutorial” event. We’ve noticed that our iOS users aren’t completing the onboarding as frequently as our Android users, so we’re only targeting first-time users on iOS. The experiment will begin on app launch, and 50 percent of the users will be shown the new onboarding flow.
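If you are curious what a 50 percent split looks like under the hood, here is a minimal Python sketch of one common approach: hashing the user ID so that each user always lands in the same group. This is an illustration only, not how Leanplum implements it. The function names, the experiment name `onboarding_redesign`, and the `old_flow`/`new_flow` labels are all hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically map a user to 'control' or 'variant'.

    Hashing the experiment name together with the user ID means the same
    user always gets the same answer, and different experiments split
    users independently of each other.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Turn the first 32 bits of the hash into a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "variant" if bucket < treatment_share else "control"


def is_eligible(platform: str, is_first_session: bool) -> bool:
    """Targeting rule from the example: first-time users on iOS only."""
    return platform == "ios" and is_first_session


def onboarding_flow_for(user_id: str, platform: str, is_first_session: bool) -> str:
    """Decide which onboarding flow to show on app launch."""
    if not is_eligible(platform, is_first_session):
        return "old_flow"  # users outside the test audience keep the old flow
    group = assign_variant(user_id, "onboarding_redesign")  # hypothetical name
    return "new_flow" if group == "variant" else "old_flow"
```

Calling `onboarding_flow_for("user-123", "ios", True)` returns the same flow every time for that user, which keeps the experience consistent across app launches.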

Once all of the parameters are set, the software will tell you roughly how long it will take to detect a difference between the control and the variant. If the time to complete suits your needs, all that’s left is to hit Start.
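To get a feel for where a time estimate like this can come from, here is a rough Python sketch using the standard sample-size formula for comparing two conversion rates. Your platform may calculate it differently. The defaults (5 percent significance level, 80 percent power) are conventional choices rather than anything from this guide, and the function names are made up for illustration.

```python
import math
from statistics import NormalDist


def users_per_group(baseline_rate: float, expected_rate: float,
                    alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed in EACH group to detect the given lift.

    Uses the normal-approximation formula for two proportions with a
    two-sided test and equally sized groups.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


def days_to_run(n_per_group: int, eligible_users_per_day: int,
                treatment_share: float = 0.5) -> int:
    """Days until the smaller group has collected enough users.

    Simplification: for an uneven split, this just waits for the smaller
    group to reach the equal-split sample size.
    """
    smaller_share = min(treatment_share, 1 - treatment_share)
    return math.ceil(n_per_group / (eligible_users_per_day * smaller_share))


# Example: 40% of users finish onboarding today and we hope for 45%.
needed = users_per_group(0.40, 0.45)
print(needed, "users per group;", days_to_run(needed, 500), "days at 500 eligible users/day")
```

The smaller the lift you want to detect, the more users you need, which is why subtle changes take longer to test than dramatic ones.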

A/B Testing Step Four: Analyze the Results

It’s been a few days, and your A/B test has been performed enough times to provide you with statistically significant data. What does this mean?

After collecting enough samples, the A/B testing software will estimate how confident it can be that the difference between the two variants is not just a result of random chance. Once that confidence passes a certain threshold (say, 95 percent), the change is deemed to be statistically significant, meaning the difference in results is unlikely to be noise and was most likely driven by the change in the app.
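For the curious, one textbook way to run this kind of check on completion rates is a two-proportion z-test. The Python sketch below is an assumption about how such a check could work, not a description of what any particular A/B testing tool does, and the numbers in the example are invented.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for a difference between two conversion rates.

    conv_a / n_a: completions and users in the control group.
    conv_b / n_b: completions and users in the variant group.
    Returns (z, p_value). A small p-value means a gap this large would
    rarely show up if the two flows really performed the same.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both groups behave the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Invented numbers: 400 of 1,000 control users and 460 of 1,000 variant
# users completed the tutorial.
z, p_value = two_proportion_z_test(400, 1000, 460, 1000)
is_significant = p_value < 0.05  # corresponds to the 95 percent threshold
print(f"z = {z:.2f}, p = {p_value:.4f}, significant: {is_significant}")
```

A p-value below 0.05 is the usual counterpart of the 95 percent threshold mentioned above.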

The final step of an A/B test is to analyze the results. Was your hypothesis correct? Are any of the results surprising?

If the variant reached your goal while the control group didn’t, then congratulations! Your hypothesis was correct, and your A/B test was a success.

But the analysis doesn’t end there. It’s a good idea to examine all statistically significant changes, not just the ones you were tracking. For example, it would be problematic if your new onboarding flow increased onboarding completions but decreased day-seven retention. This could indicate that users are clicking through the onboarding process without really understanding your app’s value proposition, leading to lower retention down the road.

A good analytics solution will usually do the heavy lifting for you. Leanplum’s analytics dashboard automatically surfaces statistically significant changes, so you can spend less time digging through your results. Instead, all of your action items are highlighted for you.

There’s one common worry among beginners: what happens if my A/B test results are flat? Can I do anything with results that don’t point me one way or the other?

If you’re worried about a flat A/B test, remember that all data has its uses. Perhaps you’ve added a feature to drive new business goals, but you’re concerned that old users won’t like it. In this case, flat results give you the green light to go ahead with the feature.

Even if your primary goal was to increase a given metric, flat results are still useful: they suggest that your hypothesis was wrong. This is a great opportunity to take a fresh approach instead of trying to optimize a tweak that users seem to be apathetic to.
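One way to make “flat” more concrete is to look at a confidence interval for the difference between the two groups. The Python sketch below assumes a simple normal-approximation interval. The function names, the example numbers, and the idea of a “maximum acceptable drop” are illustrative assumptions, not features of any particular tool.

```python
import math
from statistics import NormalDist


def difference_interval(conv_a: int, n_a: int, conv_b: int, n_b: int,
                        confidence: float = 0.95):
    """Confidence interval for (variant rate - control rate)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Unpooled standard error: each group keeps its own observed rate.
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se


def rules_out_drop(interval, max_acceptable_drop: float) -> bool:
    """True if even the low end of the interval is above the allowed drop."""
    low, _high = interval
    return low > -max_acceptable_drop


# Invented numbers: retention is 30.0% in control and 30.1% with the new feature.
interval = difference_interval(3000, 10000, 3010, 10000)
print("interval:", interval)
print("safe if a 2-point drop is tolerable:", rules_out_drop(interval, 0.02))
```

If the interval sits tightly around zero, you can be reasonably comfortable that the feature is not hurting the metric by more than you are willing to accept.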


If you’ve made it this far, pat yourself on the back: you just completed your first mobile A/B test! Once you grow more familiar with the process, you’ll be able to create tests on multiple variants with complex user segments. Luckily, the fundamentals don’t change, so the skills required to run a basic A/B test will pay dividends in the future.

If you’re looking for a powerful A/B testing platform that’s still intuitive and beginner-friendly, don’t hesitate to request a free Leanplum demo. You can A/B test your content with significant flexibility and depth through Leanplum, so it’s the perfect tool for a beginner to grow into.