Google I/O came to a close last week after three packed days of announcements, demos, and teasers. At the event in Mountain View, California, executives led by CEO Sundar Pichai outlined the company’s roadmap for Android, Google Assistant, Google Home, virtual reality, and much more.

Android Surpasses 2 Billion Monthly Active Devices

Android’s mobile dominance hasn’t slowed down and won’t anytime soon. Pichai revealed at the beginning of the keynote that the number of monthly active Android devices now exceeds 2 billion. This includes smartphones, tablets, Android Wear devices, Android TVs, and any other devices that are based on the operating system.

Pichai spoke about Google’s success during the opening minutes of the keynote, saying, “It’s a privilege to serve users at this scale.”

Artificial intelligence is going to play a significant role in shaping how users engage with and experience brands in the years to come. Companies like Google are putting machine learning into the hands of everyday users, making it both accessible and affordable. It’s no surprise, then, that artificial intelligence played an important role at Google I/O this year. Compared with last year’s event, Google’s products and services are clearly becoming more intelligent, which is changing the way developers and consumers interact with them. Below are some key takeaways from Google I/O:

NEW ANDROID O FEATURES

Google revealed Android O, the eighth major version of its mobile operating system. Aside from improved battery life, here are the key features of Android O:

Fluid Experiences: Google announced Fluid Experiences in Android O, demonstrating the picture-in-picture mode in O as part of a more “intuitive multitasking setup”.

Notification Dots: This new feature makes Android’s notifications smarter, with the ability to long-press on icons in the launcher to preview details of that app’s alerts.

Autofill: This feature automatically fills usernames and passwords saved through Chrome, on the web or on older devices, into an app a user is setting up on a new device. Separately, Android O uses machine learning for smart text selection: when a user double-taps part of a name, address, or phone number, the whole phrase is selected.

TensorFlow Lite: Dave Burke, VP of engineering at Google, announced TensorFlow Lite, a new version of TensorFlow optimized for mobile. TensorFlow is an open source software library for machine learning developed by Google. The new library will allow developers to build leaner deep learning models designed to run on Android phones.

As Google rolls out even more AI-enabled services that run on Android, it plans to use a dedicated framework that is “faster and less bloated,” Burke explains. Google is open sourcing its work and plans to release an API later this year.

Google Play Protect: This extension of Verify Apps codifies security features that have been in Google Play for the past several years. The Android team announced Google Play Protect at this year’s I/O event, comparing it to a virus scanner for Android apps. If any issues are detected, they will appear in the Google Play app update window.

What’s even more exciting is that Google announced official support for Kotlin as a programming language for Android development, alongside Java and C++. The language is expected to simplify coding for Android developers as an alternative to Java.

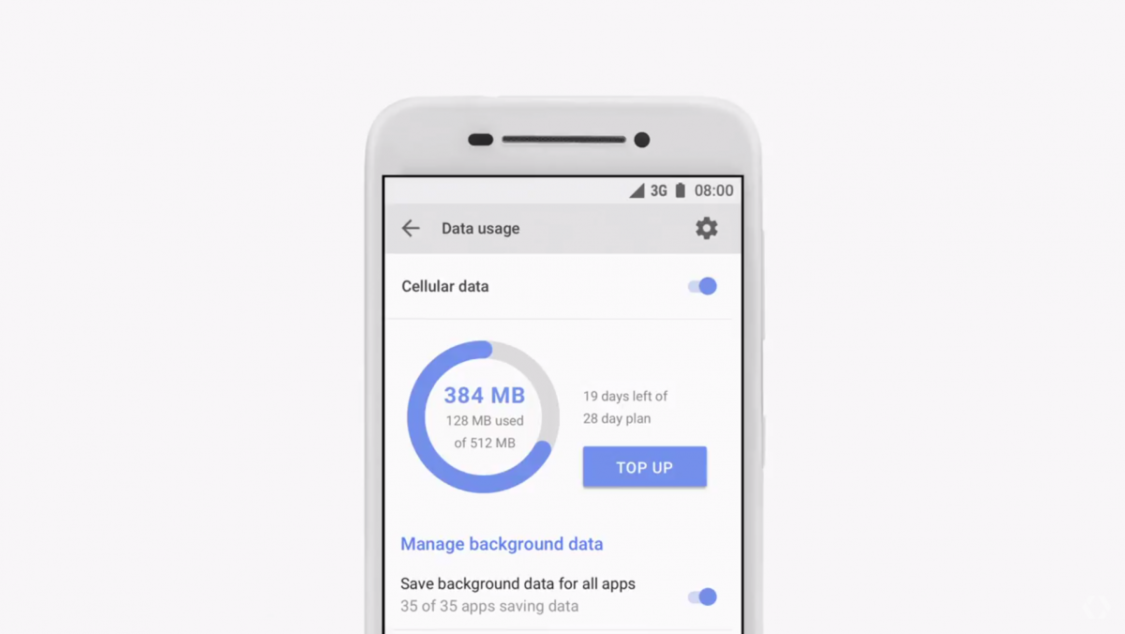

ANDROID GO

With the announcement of a new version of Android called Android Go, Pichai says Google is going after its “next billion users” on smartphones. Designed for low-powered phones, the streamlined version of Android will include a Google Play Store that features apps optimized for these lower-end devices.

Android Go is a lightweight version of the Android OS that prioritizes performance on constrained hardware. Data management is at the forefront, with a quick settings button that lets users manage their data allowances.

Starting with O, every Android release will have a “Go” version for low-memory devices.

ANDROID INSTANT APPS

Last year, Google said Instant Apps would roll out in the coming year. Now, it has announced that Android Instant Apps are available to all developers. Instant Apps let users access and use an app without first installing it on their phone. When a developer adds support for Instant Apps, tapping a URL gives the user access to the Android app: the Google Play Store finds the app and downloads only the code needed for that URL.
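To make the "download only the necessary code" idea concrete, here is a toy sketch in Python. It assumes an app is split into modules, each tied to a URL prefix, and picks the most specific module for a tapped link. The module names, URLs, and sizes are invented for illustration, and this is not how Google Play actually performs the lookup.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class FeatureModule:
    """One independently downloadable slice of a hypothetical app."""
    name: str
    url_prefix: str  # links starting with this prefix are handled by the module
    size_kb: int


# Hypothetical app split into modules; every value here is made up.
MODULES = [
    FeatureModule("base", "https://example.com/", 900),
    FeatureModule("product_page", "https://example.com/product/", 350),
    FeatureModule("checkout", "https://example.com/checkout/", 500),
]


def resolve_module(url: str, modules: List[FeatureModule] = MODULES) -> Optional[FeatureModule]:
    """Return the module with the longest matching URL prefix, or None."""
    matches = [m for m in modules if url.startswith(m.url_prefix)]
    # The longest prefix is the most specific match for the tapped link.
    return max(matches, key=lambda m: len(m.url_prefix), default=None)


if __name__ == "__main__":
    tapped = "https://example.com/product/42"
    module = resolve_module(tapped)
    print(module.name if module else "no instant app for this link")
```

In this sketch, a link to a product page resolves to the small `product_page` module instead of the whole app, and a link from an unrelated site resolves to nothing, so it would simply open in the browser.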

GOOGLE ASSISTANT

The key theme for this year’s I/O event was described by Pichai as a “shift from Mobile-first to AI-first”.

Google also showcased its AI-powered Assistant, which functions much like a chatbot. Users can ask Google Assistant questions or make requests, and it will use context to generate results. With Google Assistant, users can:

- Make quick phone calls

- Send text messages

- Send emails

- Set reminders

- Play music

- Navigate to places

- Ask it anything

Google Assistant is expanding beyond Android and is finally coming to iPhone. It will be a standalone app on iPhone and iPad, offering many of the same functions it provides on Google’s own operating system.

The new Google Assistant SDK allows manufacturers to build Google Assistant into whatever device they want, from cars and TVs to home appliances, opening up the platform to a wide range of new markets.

Google revealed that 100 million devices now have access to Google Assistant. In addition, Google announced that Lens will have an Assistant integration, allowing users to receive information about an object they have photographed. That leads us to the next big announcement: Google Lens.

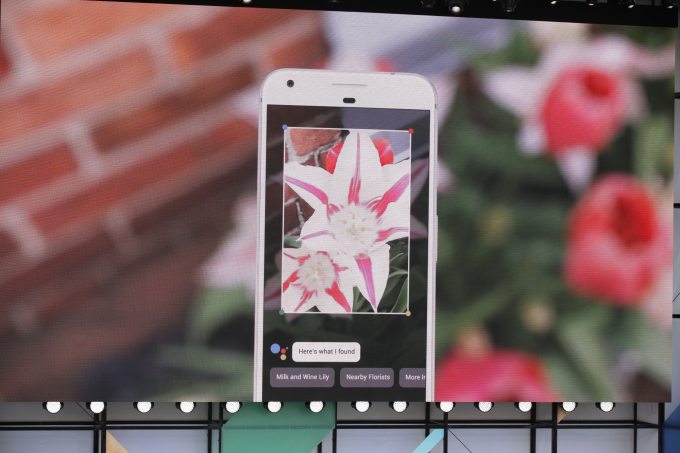

GOOGLE LENS

As mentioned earlier, the theme of this year’s event was an AI-first vision. With Google Lens, Pichai explains, Google is “turning your camera into a search box”.

Google Lens is a set of vision-based computing capabilities that can identify physical objects using Google’s AI technology. Lens will be integrated into Google Assistant and Photos first, before other products.

Sundar Pichai gave the audience a few examples of Lens’s capabilities, such as identifying flowers, finding a Wi-Fi network name and password by scanning a sticker on the back of a modem, and identifying a restaurant building with a simple scan using a smartphone camera. Using this new Lens technology, the Assistant will analyze a user’s surroundings and display relevant content on their screen.

Google isn’t the first company to add artificial intelligence to a smartphone’s camera. At the recent Galaxy S8 launch, Samsung introduced “Bixby Vision”, a sight-based version of its Bixby digital assistant. And Snapchat (and now Instagram) use narrow, task-specific AI to apply photo filters to a user’s face. Google, however, offers much more with Lens than just image recognition, shopping, or filters.

GOOGLE HOME

The I/O developer conference also introduced a set of new features for Google Home, the voice-enabled speaker that brings artificial intelligence into the home. The first of these features was ‘proactive support’, which means that Google Home will light up with notifications automatically, without the user having to ask. Since Google Home users are likely to already use services like Gmail, Calendar, or Maps, Home can help answer questions that are relevant to their schedule and lifestyle.

Google Home Going Mobile: Similar to Amazon, Google is turning its smart speaker into a phone. Users will be able to place free calls throughout the United States and Canada.

Google Home is Getting More Useful: Home is also going to be able to control HBO Now, Hulu, SoundCloud, Deezer, and more. Further, Google is opening up access to Home’s Bluetooth radio, meaning users can use it just like any other Bluetooth speaker.

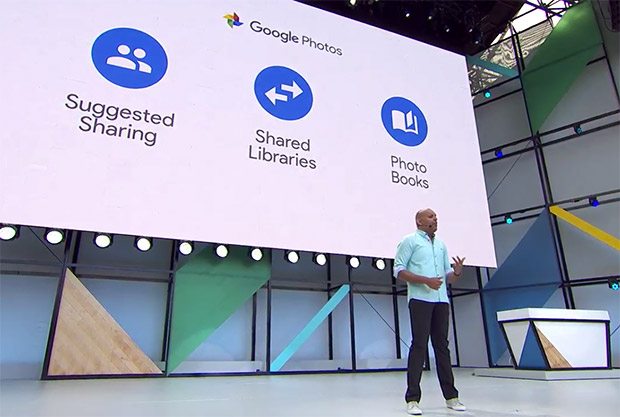

GOOGLE PHOTOS

Google Photos now recommends that you share photos you’ve taken with people that it recognizes as being in the picture. Google calls this Suggested Sharing. It’s also introducing Shared Libraries, which allow groups (such as a family) to collectively add images to a collection more easily.

Google also now offers printed Photo Books, which can be created directly from your phone. Google will even recommend books to users when it thinks a particular collection makes sense. In the future, Google says, Photos will be able to automatically remove unwanted items from your shots.

VIRTUAL REALITY

The big reveal in virtual reality was a standalone Daydream VR headset, developed in partnership with HTC and Lenovo. Google’s VR initiatives are expanding beyond Daydream’s current design, which involves strapping a smartphone into the headset. The upcoming headsets won’t require a smartphone or PC to power the VR experience. Instead, they track virtual space with something Google refers to as “WorldSense,” powered by technology from its Tango augmented reality system. This is a big step for virtual reality, as everything is contained in the headset rather than in an external device. The standalone Daydream headsets from HTC and Lenovo will be available later in 2017.

What to Expect Moving Forward

Last year, it wasn’t clear how Google was going to influence the AI revolution. At Google I/O 2016, the VR announcements could easily have been dismissed as a fad, but this year Google proved its dedication to emerging technologies with the large number of AI-enabled products it revealed.

This year, Google showed what “AI-first” really means for the company. Its focus on a more intuitive user experience gives developers new ways to interact with users. It seems likely that this year will mark a major turning point not just for Google, but for the entire industry.